SurveyMonkey

Concept testing product

Context

Co-lead Senior Product Designer · 2018

Services: Design strategy · Research & testing · UX/UI design · Mentorship & leadership · Stakeholder communication

Status: Launched — available on the SurveyMonkey platform as Market Research Solutions.

Summary of work

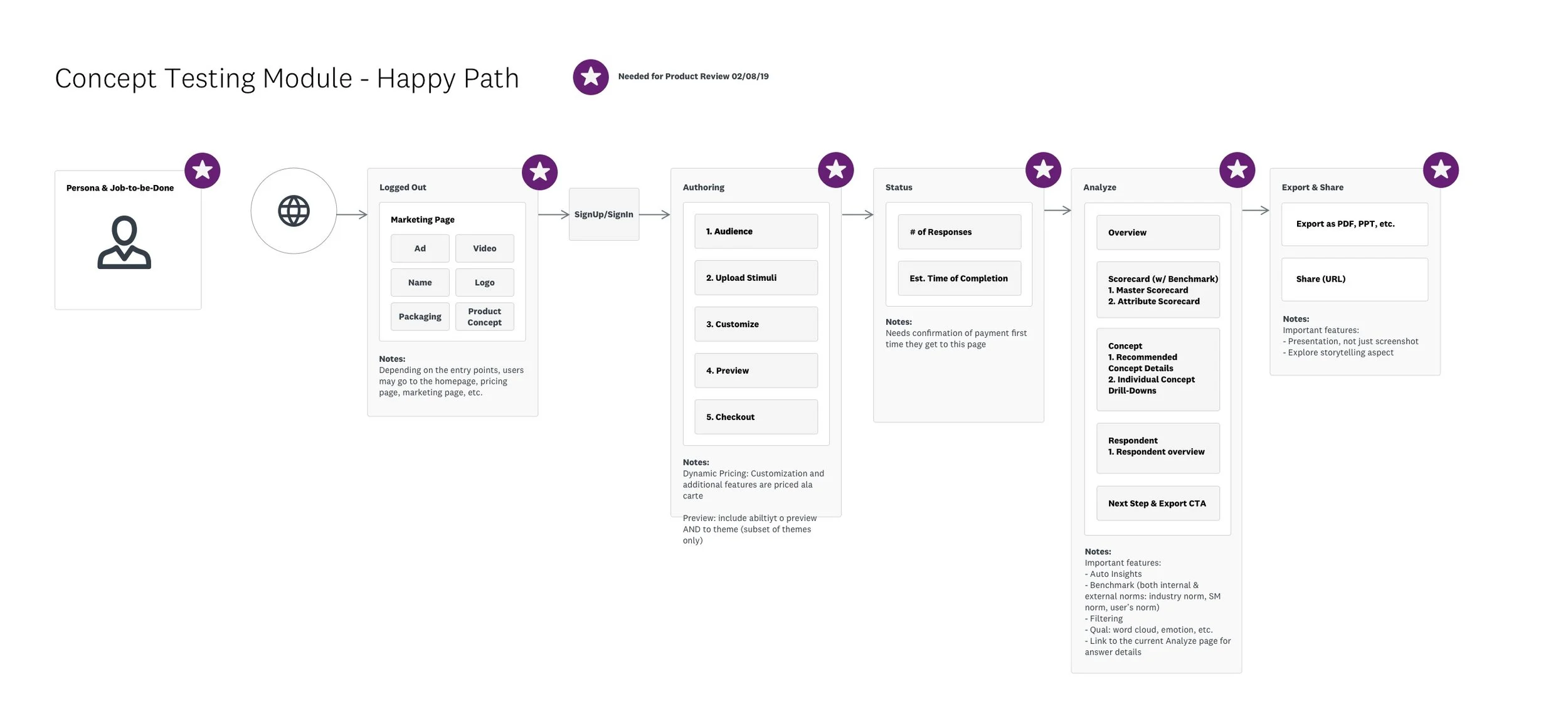

We formed a tiger team within SurveyMonkey to strategize and build a new product on our platform: Concept Testing modules that let users draw on our experience and methodologies while keeping the flexibility of a competitive DIY testing product.

Status

This project has launched and is available on the SurveyMonkey website. A product announcement is also available.

Overview

At the time we embarked on building this product, the SurveyMonkey platform provided rich features for all users, but we were not orienting our survey tool to address specific user needs. What if we could stay horizontal to serve a large user base, but also go deep to deliver higher value to our users and business? We imagined users coming to SurveyMonkey to choose a product that is highly tailored to their specific needs and workflows, right on our core survey platform.

My contributions

As a senior designer on this project, I worked closely with stakeholders and cross-functional teams to define and execute the work.

Workshops & strategy

Facilitated design workshops and collaborative sessions with cross-functional teams to drive feature definition, goals, and strategy.

Design direction

Planned, directed, and orchestrated multiple design initiatives throughout the project lifecycle.

Mentorship & leadership

Managed and coached designers across teams, levels, and disciplines.

User advocacy

Advocated for and applied user-centric processes in collaboration with cross-functional teams, keeping users in focus through research, design, and testing.

Setting the context

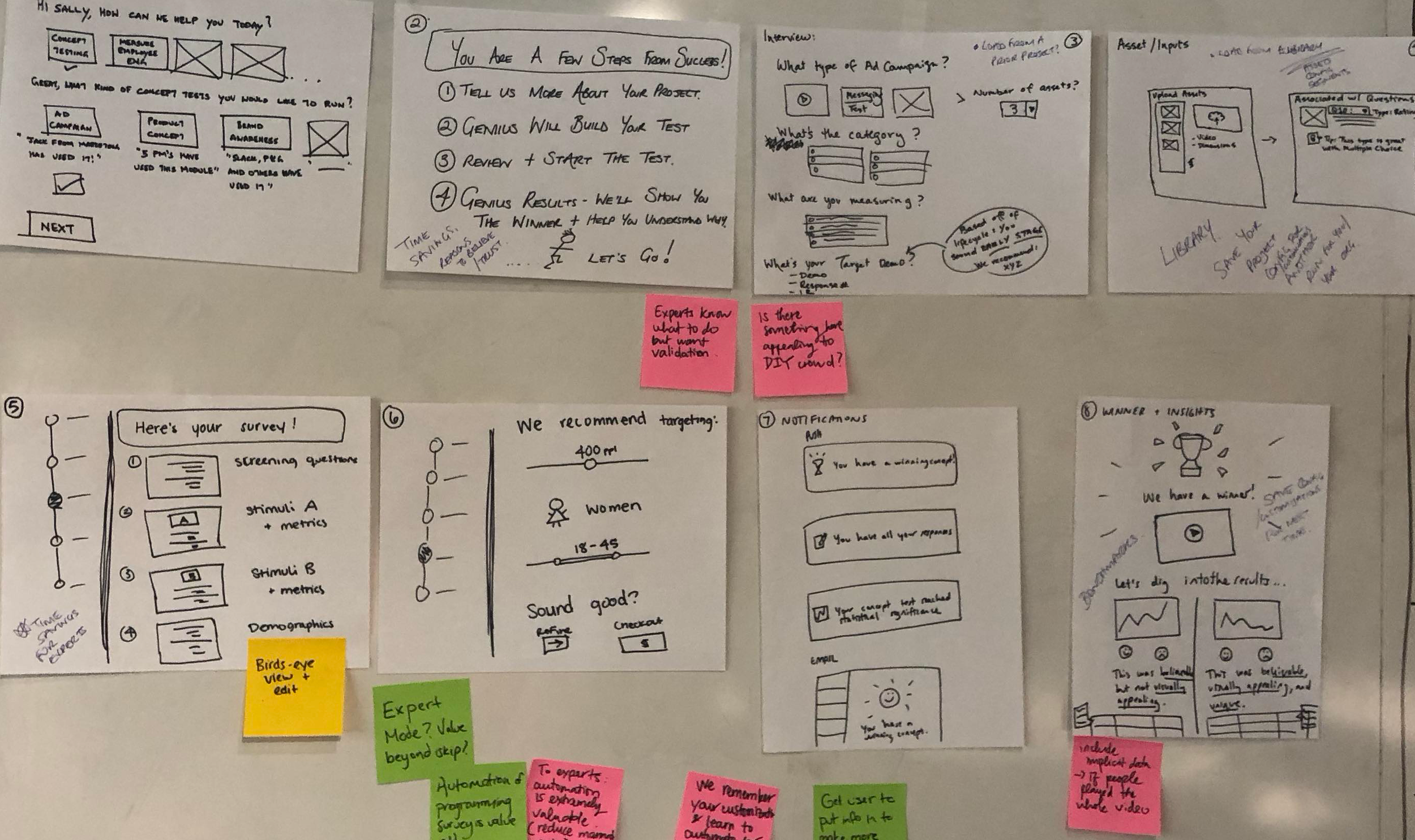

We started with 12 use cases our strategy and research teams had generated for our consideration.

We designed, proposed, and led a two-day workshop with 15+ attendees from engineering, product, design, research, strategy, marketing, and customer service. The topics we covered gave valuable insights into historical context, business rationale, company strategy, research findings, and goal alignment.

Design exploration

We led photo-ethnographic groups to generate design frameworks and solution pitches.

User empathy

We identified and tested our most and least loyal customers and their journeys. The biggest shift came when we learned we were losing our most motivated users at a critical moment in their journey.

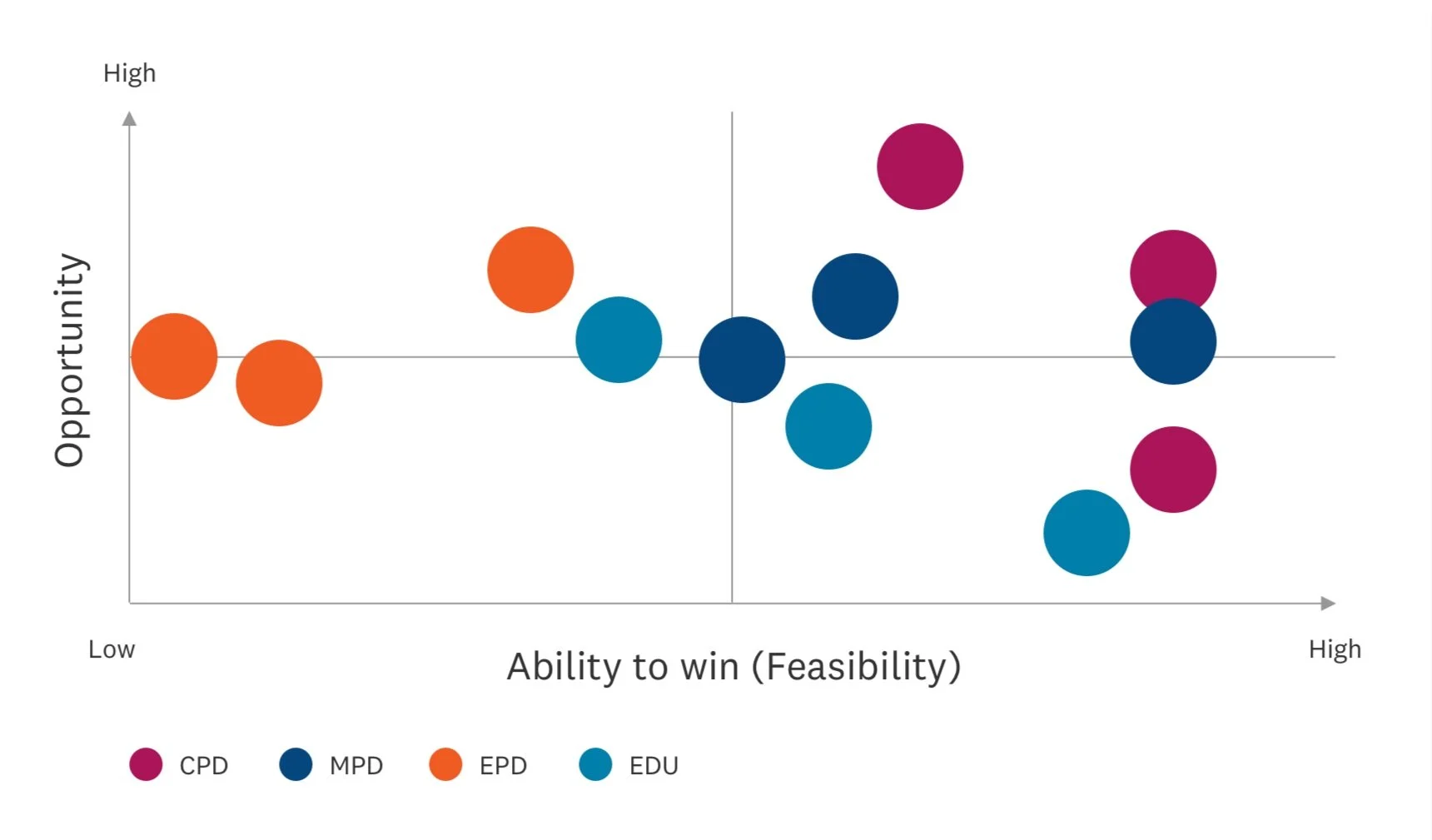

Prioritization matrix

This workshop was designed to pinpoint the use cases that offered the most opportunities for our audience and to help us make tradeoffs between competing directions.

Aligning perspectives

We generated data to create an evidence-based foundation for our direction. We partnered with collaborators to communicate the findings and recommendations and to align on our hypothesis before moving forward.

Concept testing product direction

The design lead (Quan Nguyen, now at Meta) and I were tasked with leading the user experience and design of a new product, in partnership with a product owner, an engineering lead, and a program manager. The product would live under the umbrella of the core survey experience but present a different offering to a new customer base and persona.

Market opportunity to address

Key user pain points

Changes are constant

By the time a test is complete, evolving trends have often already shifted the go-to-market timeline.

Market research is costly

Entire annual budgets are consumed by one or two projects, and long turnaround times compound the financial risk.

Insights are complicated

Reports are long, dense, and packed with distilled data — making it hard to know what's worth sharing with stakeholders.

Reports aren't digitally native

Reports delivered as printed documents or PDFs force teams to recreate the work just to present findings — a time sink that adds no value.

Wait times are too long

Agency engagements can take up to six months to deliver findings, stalling decisions that can't afford to wait.

Solutioning

Rigorous user testing

Over the course of eight months, the design lead and I ran weekly user research sessions for 16 nearly consecutive weeks.

This allowed us to gain insights on concepts quickly and move confidently in directions that tested well with our users.

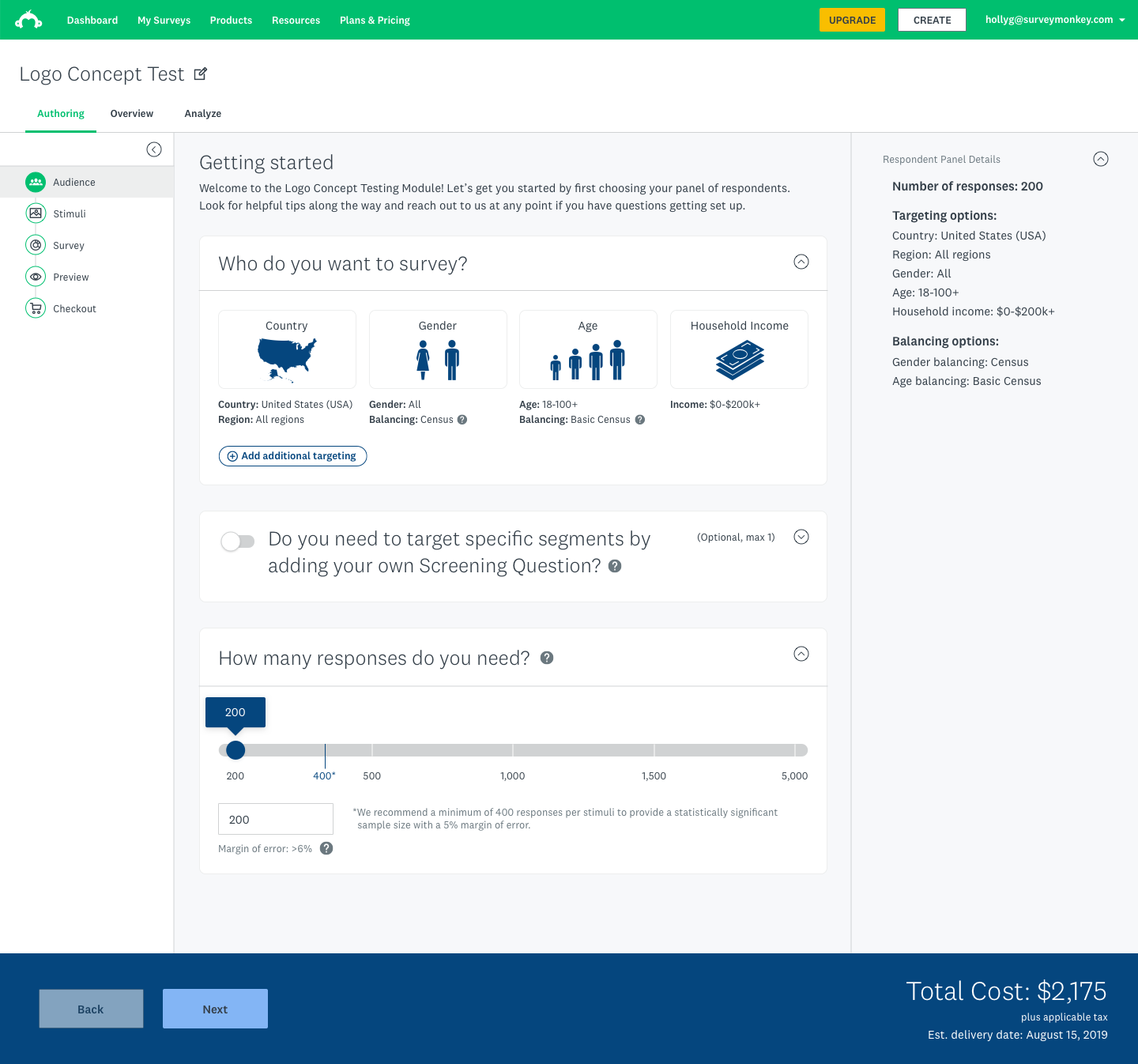

Targeting the correct audience

I led the design of audience selection, which utilized our unique panel of research participants across a wide range of demographics. This allowed us to target respondents based on wide-ranging but specific criteria, including multilingual and international respondents.

This portion included target demographic selection, screening questions, and setting the number of responses users need. The dark blue bar at the bottom keeps a running total for the project.

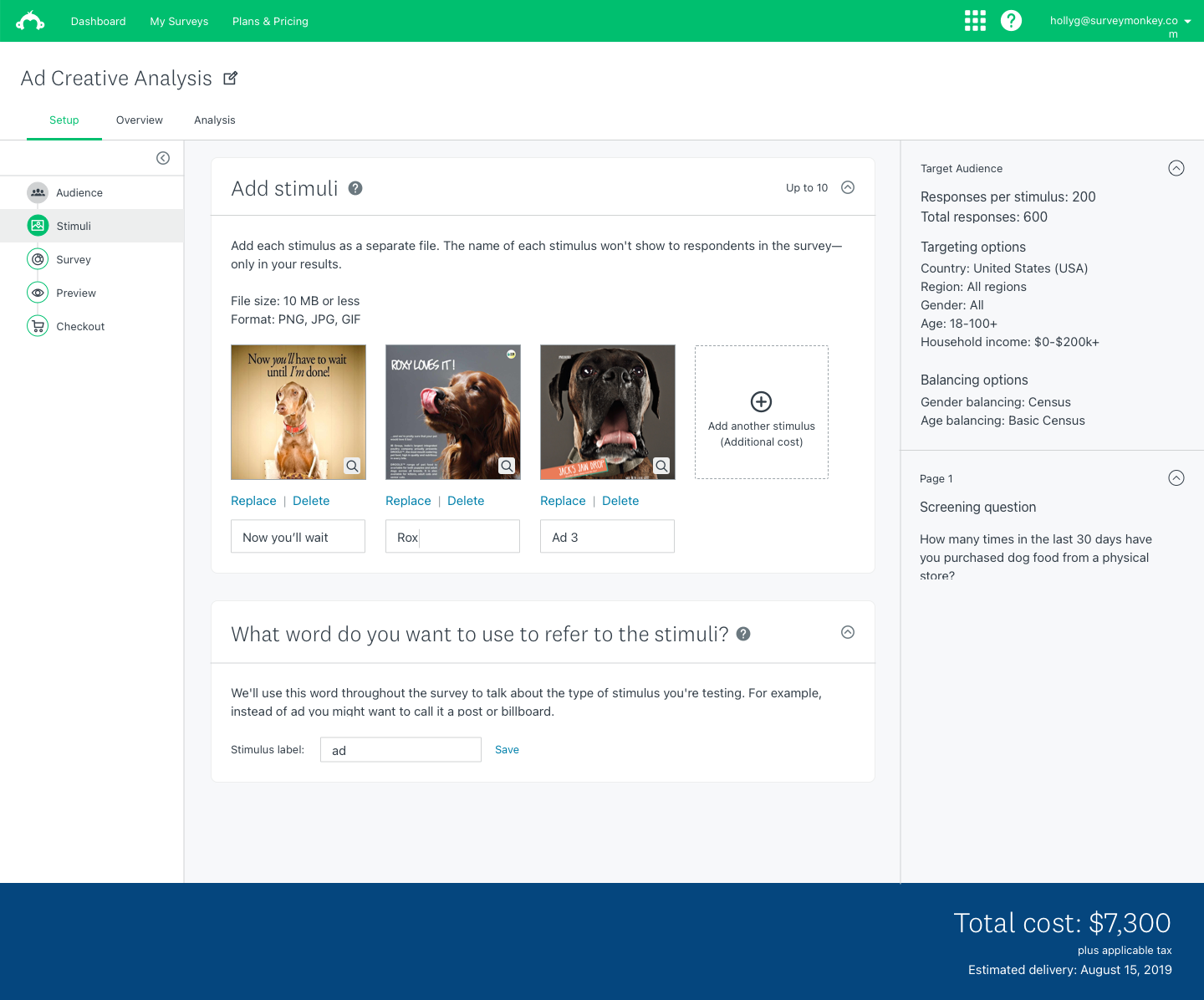

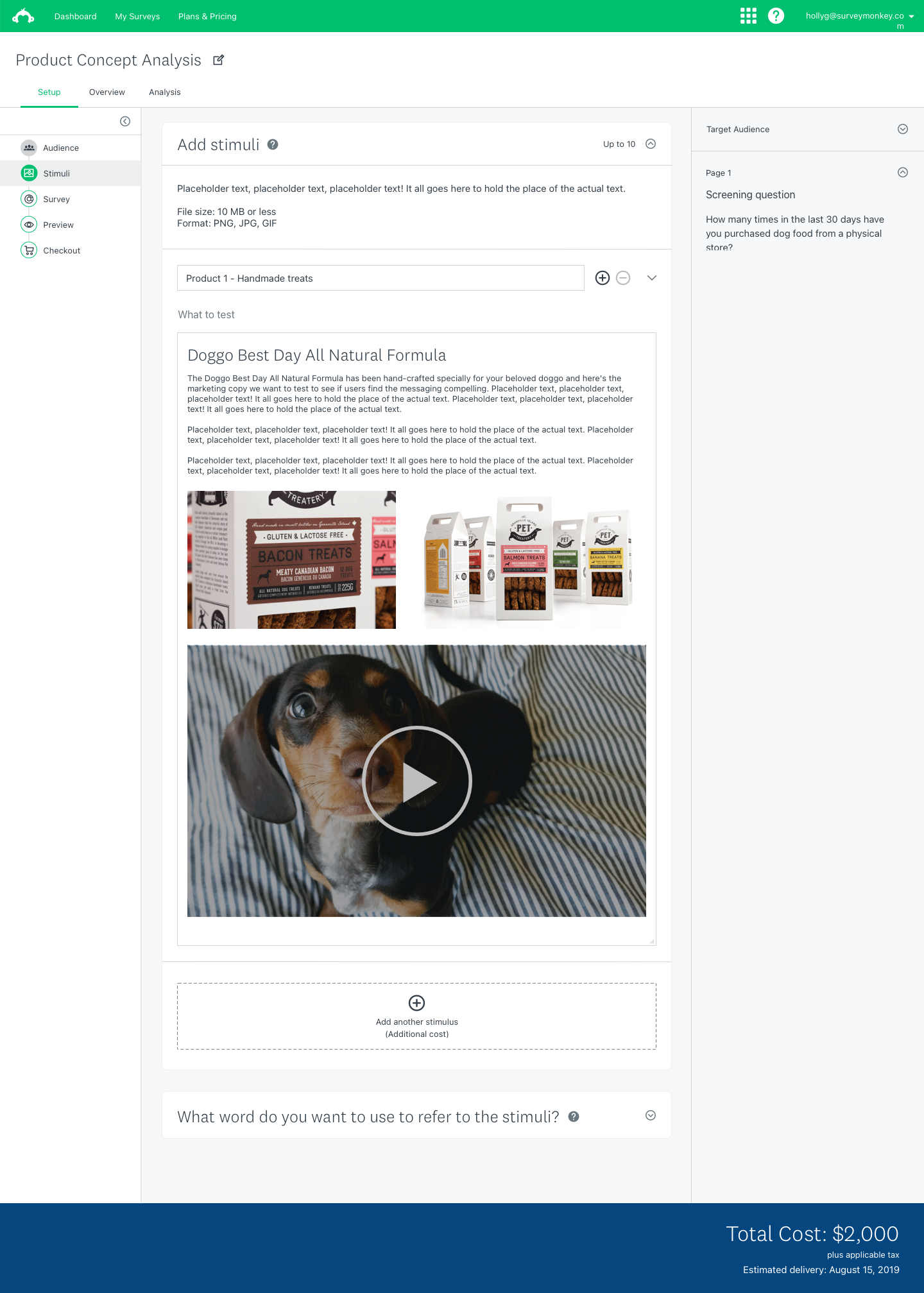

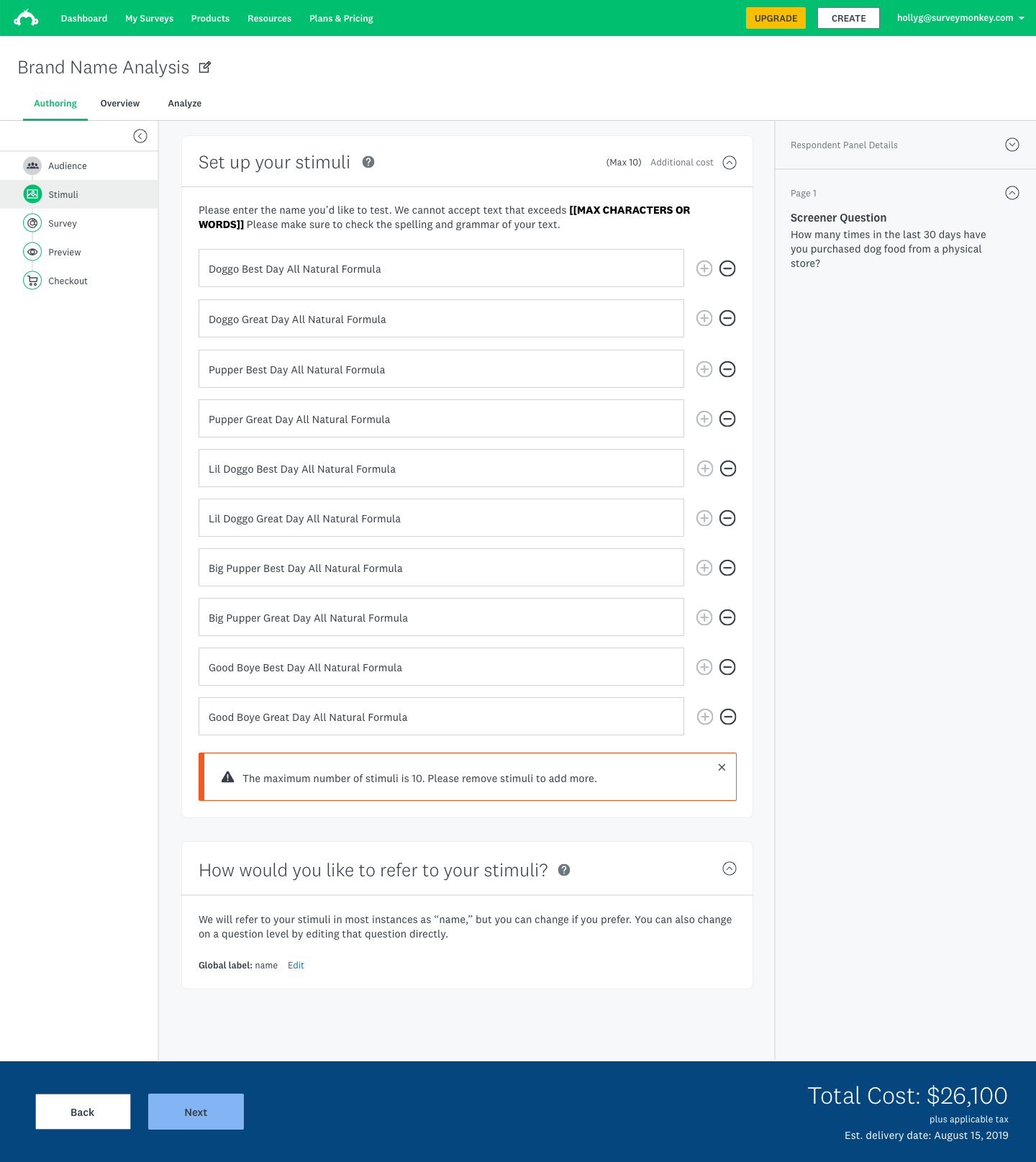

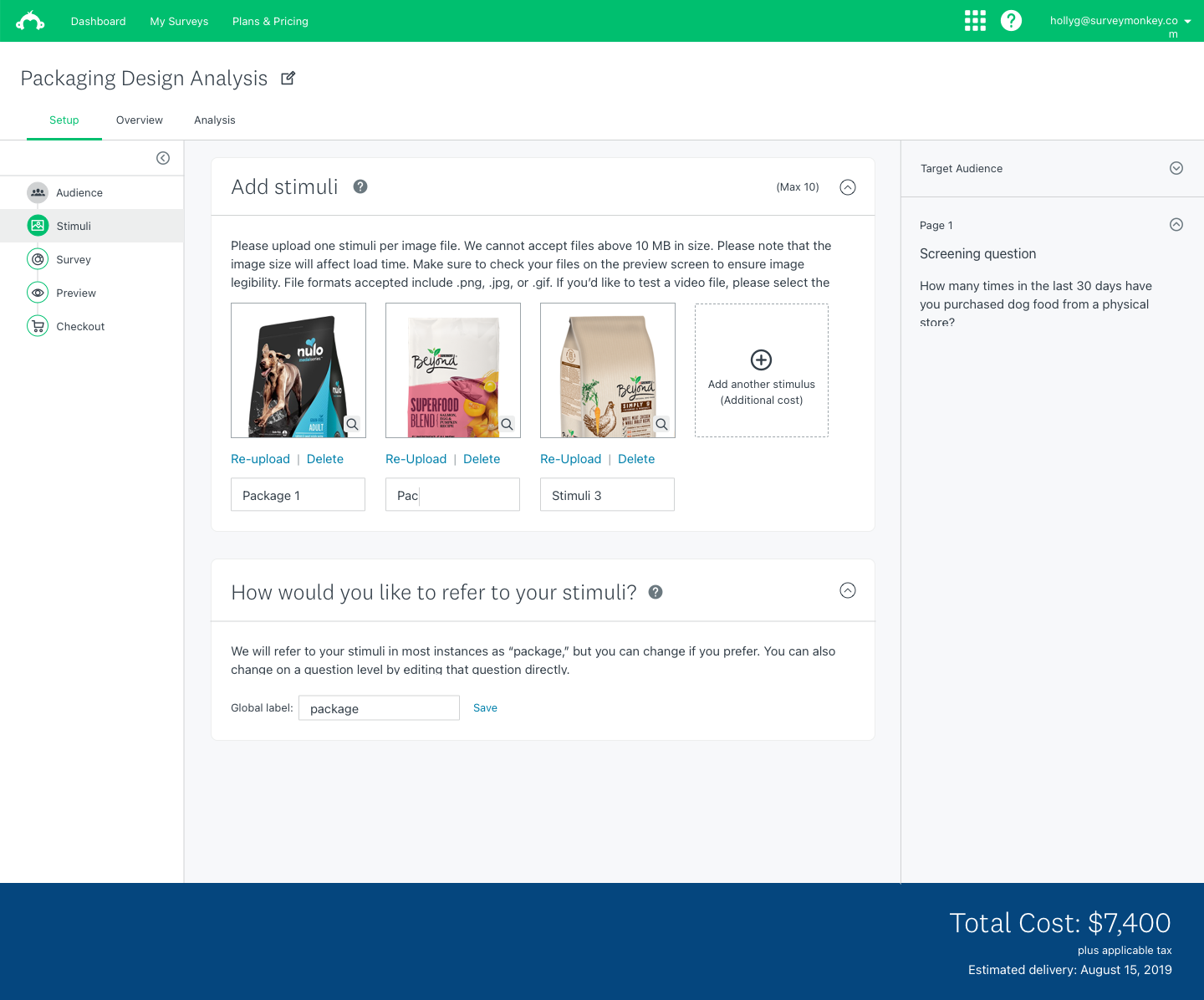

Setting up stimuli to test

I also designed the experiences for uploading stimuli and authoring the test that goes out to participants.

These flows let users add every stimulus they wanted to test, including ads, product names, videos, images, and packaging designs.

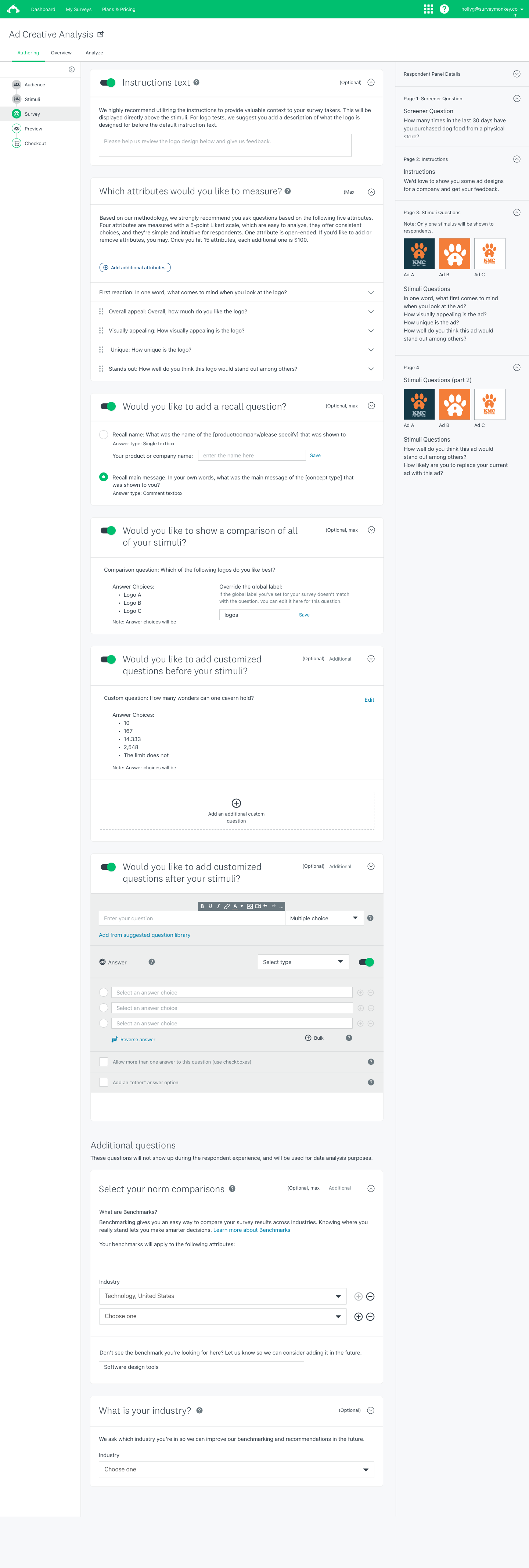

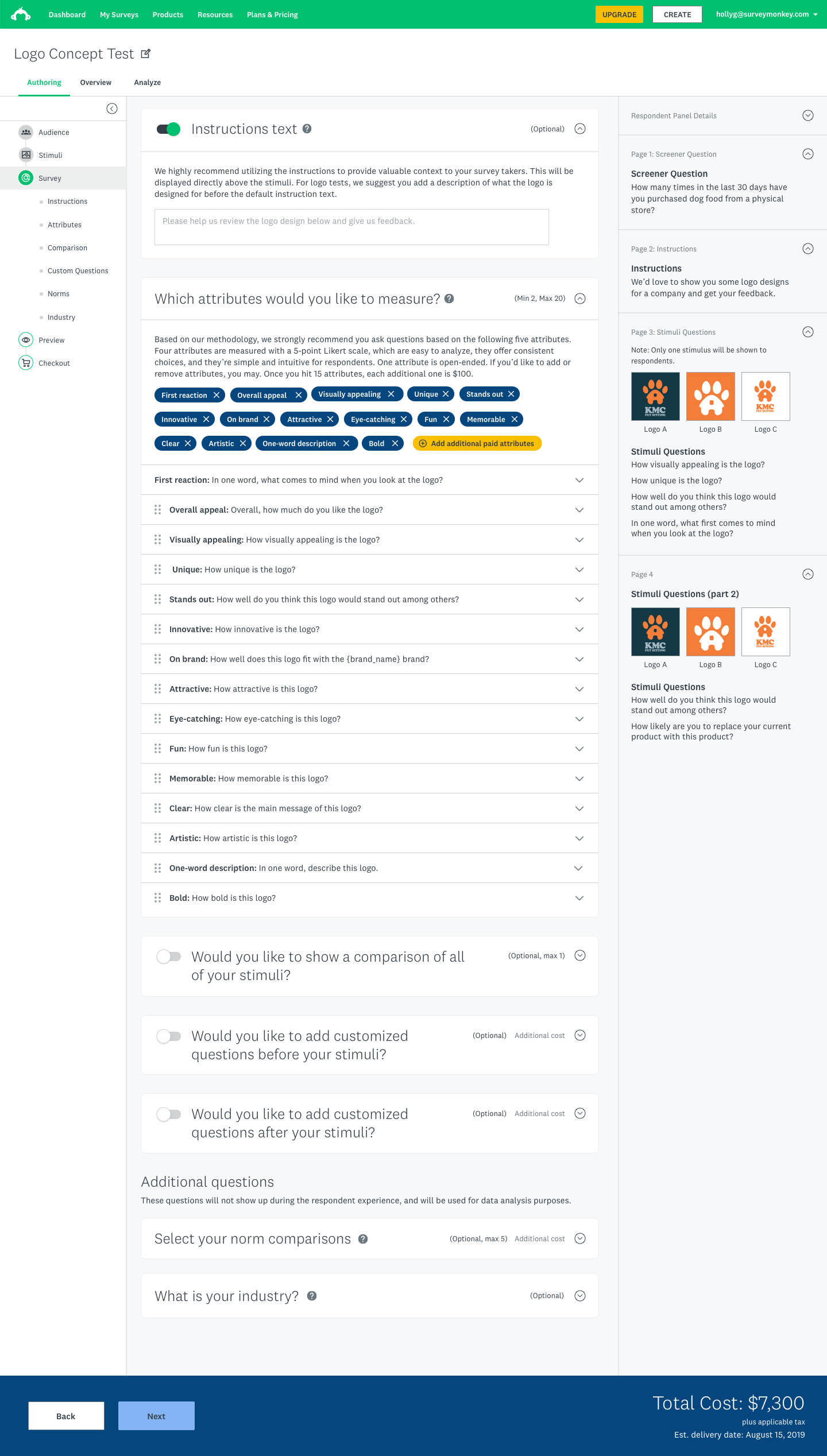

Designing the survey

During the survey step, users can write instructions for their respondents, select the attributes they want to measure, choose whether to show a comparison of all stimuli, and decide whether to add custom questions before or after their stimuli are shown.

They also have the option to add norm comparisons for their data and to identify the industry they're in. These inputs are used during the analysis stage to deepen the insights we deliver.

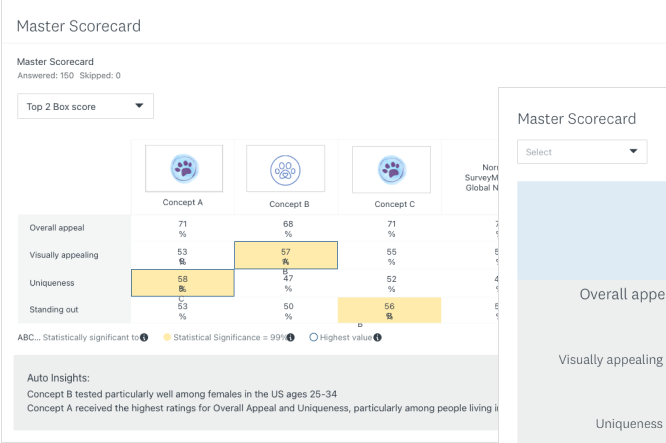

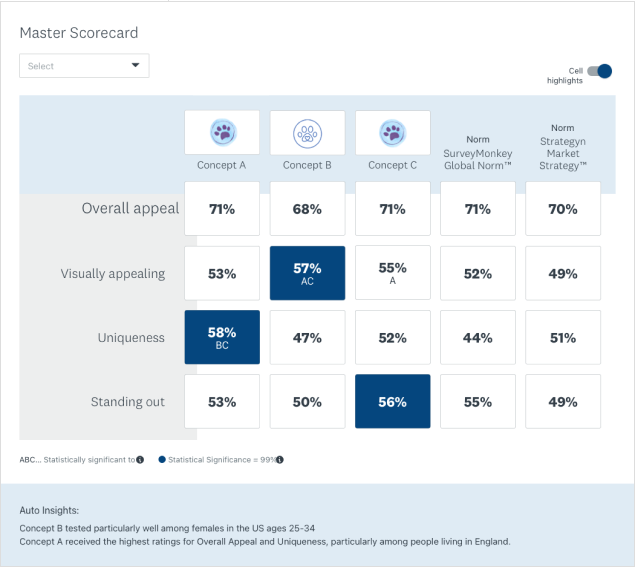

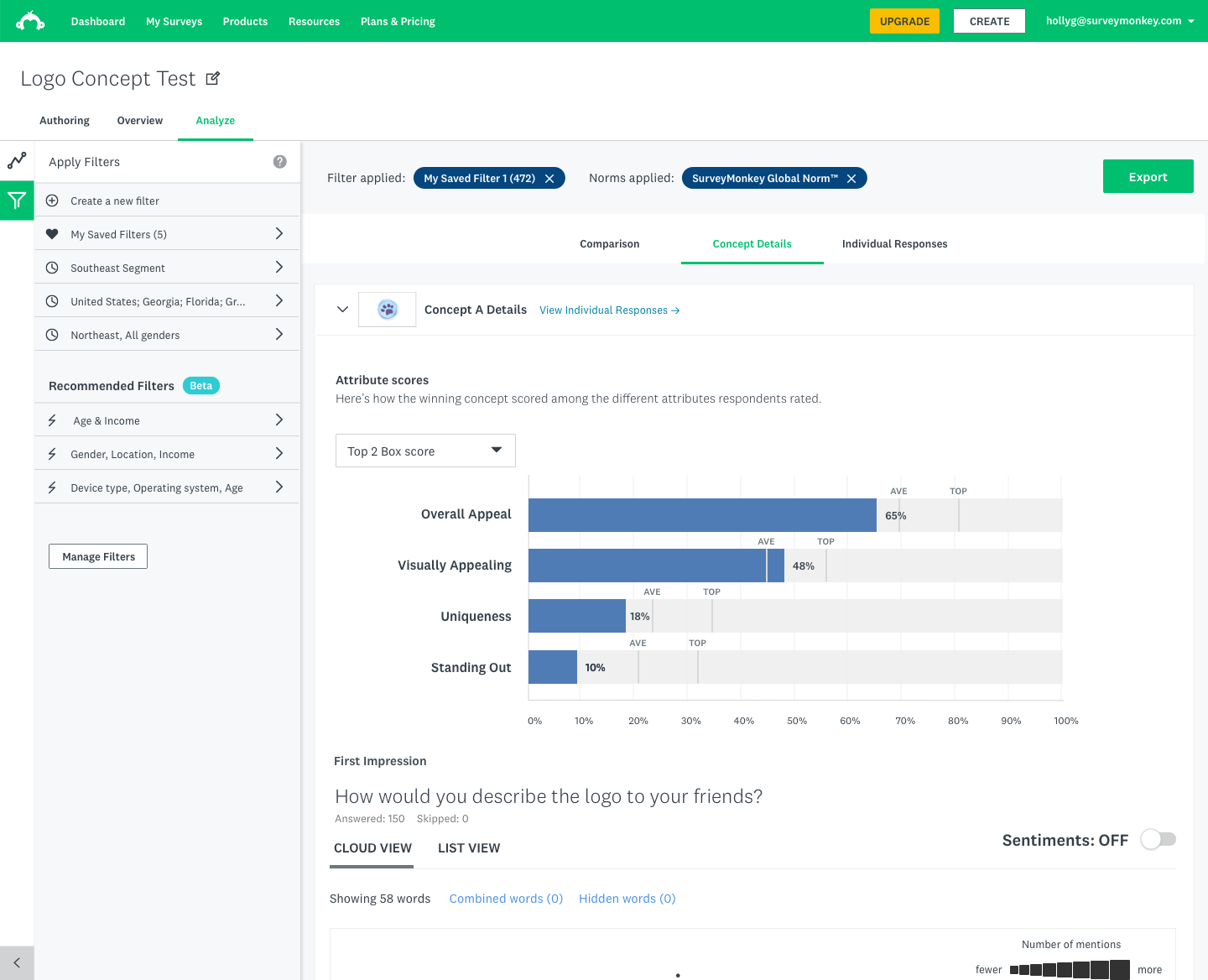

Analyzing and filtering the results

One of the key offerings of this experience was the ability to analyze the data collected during the tests and to let users look for additional insights on their own.

This gave them more control over the story they told. Users could also save the complex filtering views they created and apply AI-generated 'recommended' filters. These filters highlighted data outliers and generated additional insights for our market researchers.

How this work was impactful

$3.2B

total addressable market for the new product offering

10 mo

from kickoff to launched product experience

50%

reduction in time to set up demographic targeting after iteration (and that's just one example of how we iterated forward)

30%

further along — we created a framework for executing design at this scale so the next team doesn't start from zero

My end goal is to close the loop with stakeholders and use data to tell the story in a compelling way.

Lyssette C. · UX Research and Customer Insights at Square

Learnings

Iteration will get you there: We applied a lot of rigor to our iteration process, and the effort paid off. What started as a proposal of ten markets we might pursue ended as a launched product experience for a new market. One of the key drivers was our weekly user testing sessions, where we showed user flows and solution approaches as we iterated on them to get feedback. Because our solutions were backed by research, we went to market with a high degree of confidence in them.

Use today's work to build on tomorrow's challenges: While creating this new offering, the design lead and I developed a product design framework that future teams can use to build on the learnings and solutions from this work. That way, future efforts won't have to start from the same 'zero' state we did; they'll already have insights and guidance to start with.